Technical SEO refers to the process of optimizing the infrastructure of a website for crawling and indexing by search engines. It boosts your website’s visibility and helps you rank higher in search results.

It includes strategies that improve website speed, enhance security, streamline site navigation and ensure mobile compatibility.

These actions make it easier for search engine bots to understand your website’s content and structure, thereby improving the likelihood of ranking higher on Search Engine Results Pages (SERPs).

Understanding Technical SEO is not about knowing how to code or design a website but about creating an environment where content can shine and reach its targeted audience. That is why we will go over the essentials of technical SEO so you can significantly improve your business.

Key Highlights

- Technical SEO is crucial for improving website visibility and user experience, impacting site speed, mobile optimization, website architecture, and security

- Site speed directly affects user experience and SEO

- Responsive design and mobile optimization are increasingly important for SEO, with search engines like Google implementing mobile-first indexing

- Structured data and schema markup enhance search engine understanding of your website content, leading to more detailed search result listings and better user engagement

- Implementing website security measures, including HTTPS and SSL certificates, protects your site from cyber threats and improves your site’s SEO performance

Understanding the Importance of Technical SEO

SEO is divided into three categories: On-page SEO, Off-page SEO, and Technical SEO.

- On-page SEO focuses on optimizing content for relevant keywords and improving user engagement.

- Off-page SEO deals with building high-quality backlinks to improve website authority.

- Technical SEO forms the foundation upon which these strategies stand.

Technical SEO ensures that all your meticulous keyword research and content optimization efforts don’t go unnoticed.

With good technical SEO, your site becomes more navigable for search engine crawlers, resulting in better indexing, higher search rankings, and increased visibility. Therefore, overlooking technical SEO can harm your site’s performance and visibility.

Key Role in Improving Website Visibility and Search Engine Rankings

Technical SEO serves as the bridge between your website and search engines. Its key role is ensuring search engines can crawl, index, and understand your website content effectively.

This is a fundamental prerequisite for any site to rank higher on Search Engine Results Pages (SERPs).

Factors like site speed, mobile-friendliness, website architecture, and structured data significantly impact how search engines perceive and rank your website.

For instance, a slow-loading website can drive visitors away, negatively impacting the user experience and increasing the bounce rate.

Similarly, a non-mobile-friendly site can cause you to miss out on many mobile internet users, adversely affecting traffic and visibility.

Core Components of Technical SEO

Crawlability and Indexing

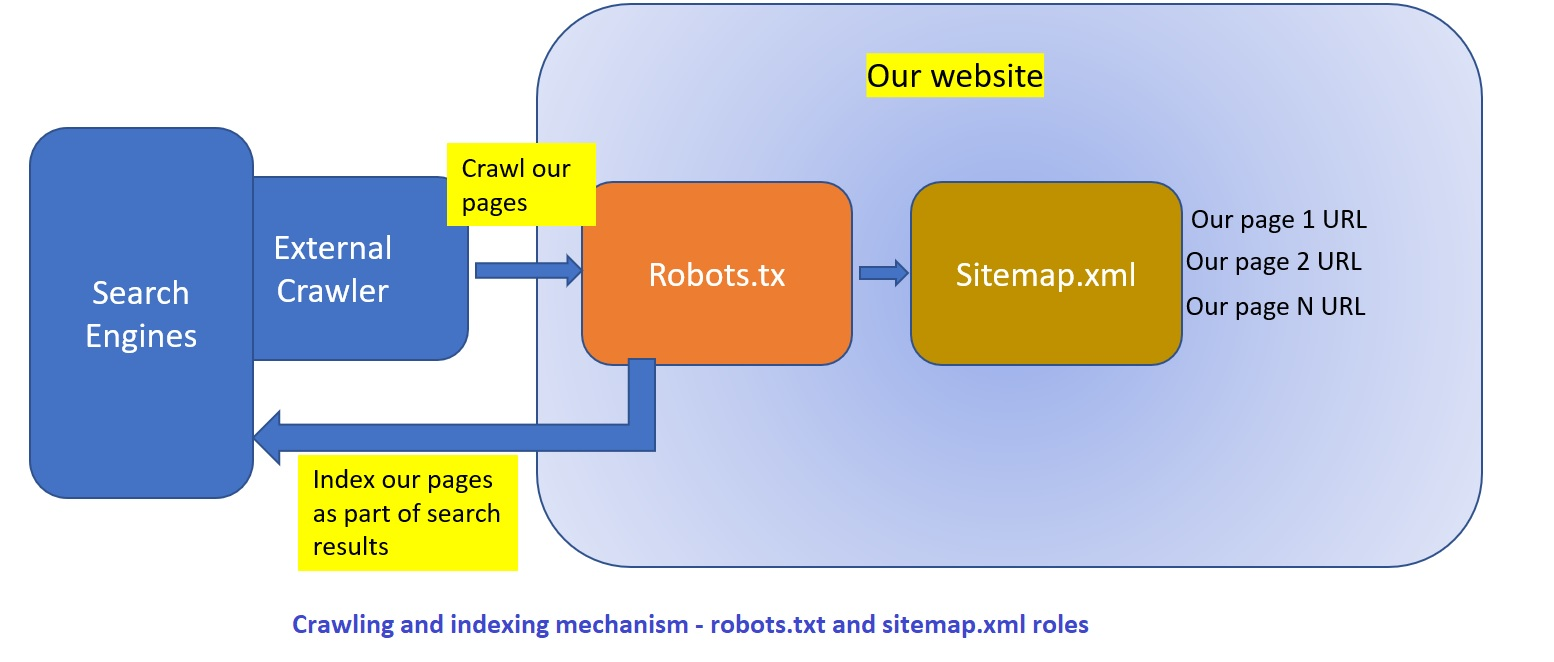

For search engines to rank your website, they first need to discover and understand your content. This process is known as crawling and indexing.

The more accessible your website is to search engine bots, the better it can be indexed and ranked.

You can improve crawlability by creating a robots.txt file, which tells search engines which pages to crawl and which to skip.

Moreover, an XML sitemap can also improve crawlability, acting as a roadmap for search engines to find and understand your website’s content.

Site Speed and Performance

Site speed is a pivotal technical SEO factor significantly influencing user experience and rankings.

You can enhance site speed by:

- Optimizing your code

- Reducing the size of images

- Leveraging browser caching techniques

- Utilizing content delivery networks (CDNs) to distribute the load of delivering content

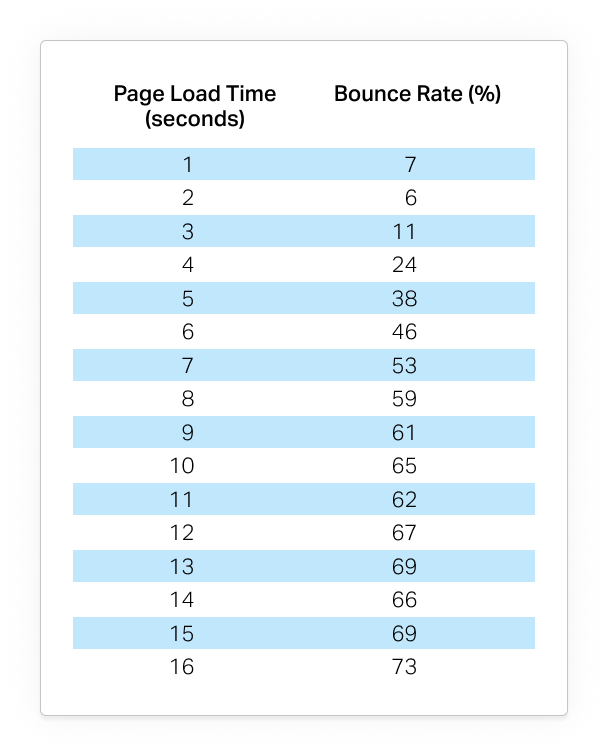

A delay of even a few seconds can increase bounce rates, thereby harming your SEO.

Mobile-Friendliness and Responsive Design

In an era where mobile devices are ubiquitous, having a mobile-friendly website is no longer optional.

A site that doesn’t render well on mobile devices can lead to a poor user experience and lower rankings on SERPs.

Responsive design is an approach to web design that makes your web pages look good on all devices (desktops, tablets, and phones).

By using flexible layouts and grids, images, and intelligent CSS media queries, you can ensure that your website provides a seamless user experience across different screen sizes and devices.

Website Architecture and URL Structure

A well-structured website allows users to navigate your site effortlessly and helps search engine crawlers understand your website’s content.

A logical site hierarchy, clear navigation, and user-friendly URL structures are best practices that enhance your site’s architecture.

A URL that accurately describes the content of the page is beneficial for user experience and helps search engines index your content accurately.

Structured Data and Schema Markup

Structured data refers to any data that is organized in a manner that makes it easily searchable by search engines.

By implementing schema markup, you can provide search engines with additional context about your site’s content, which helps them understand your content better.

This can make your content eligible for rich results, which enhance visibility and improve click-through rates.

XML Sitemaps and Robots.txt

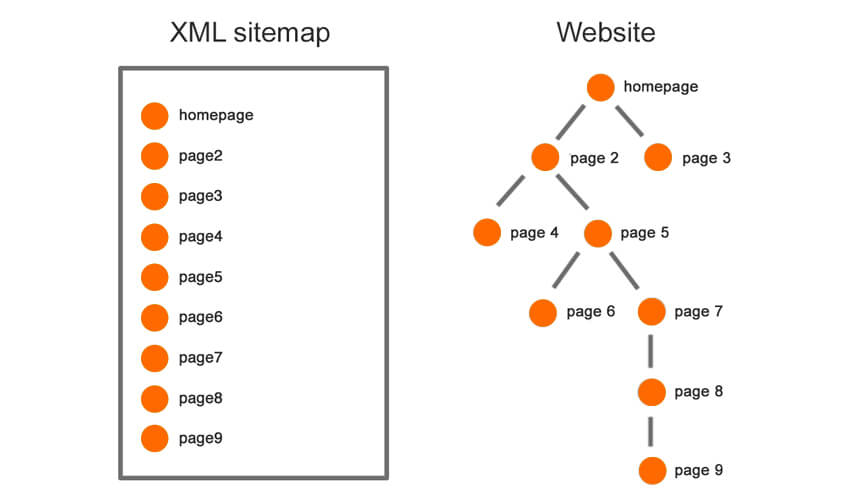

XML sitemaps are essentially a roadmap of your website that guides search engines to all your important pages.

On the other hand, a robots.txt file tells search engines which parts of your website they should or shouldn’t crawl, thereby preventing them from wasting time crawling irrelevant or duplicate pages.

HTTPS and Website Security

Website security is crucial for gaining user trust and achieving higher search engine rankings.

An insecure website can drive away potential visitors and cause search engines to deem your site less trustworthy.

By implementing HTTPS and installing SSL certificates, you can encrypt data transmission, protect your site against security threats, and convey to users and search engines that your site is secure.

Crawlability and Indexing

Importance of Search Engine Crawlers

Search engines have automated software applications called crawlers or spiders. Their primary role is to scour the internet, follow links, discover new web pages, and index them for future retrieval.

When a user types a query into a search engine, the search engine sifts through its vast index of web pages to provide the most relevant results.

Therefore, the visibility of a website in search engine results heavily relies on the successful crawling and indexing of its web pages.

If your site is not accessible to search engine crawlers, it is essentially invisible to search engines and, hence, to your potential audience.

Optimizing Robots.txt File for Crawling

A robots.txt file serves as a set of instructions for search engine crawlers. Located at the root directory of a website, it tells crawlers which parts of the website they should crawl and which they should ignore.

While it does not enforce these directives, well-behaved bots tend to respect the instructions. A well-optimized robots.txt file makes your website more crawlable by steering search engine crawlers toward the important parts of your site and away from areas you do not want crawled, like duplicate pages or backend folders. Keep in mind that blocking a page from crawling does not guarantee it stays out of the index: a blocked URL can still be indexed if other pages link to it.

Creating a robots.txt file requires careful consideration to avoid unintentionally blocking critical pages. Use a simple syntax to instruct search engine bots, specifying user agents (the crawlers) and disallowing (or allowing) them from specific paths.

NOTE: The robots.txt file is publicly available, so avoid using it to hide sensitive information.
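To make the syntax concrete, here is a minimal Python sketch that parses a small robots.txt file and checks which URLs a crawler may fetch. The rules, paths, and the example.com domain are hypothetical placeholders, not recommendations for your site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths and domain are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
Allow: /

Sitemap: https://www.example.com/sitemap.xml
"""


def build_parser(robots_txt):
    """Parse robots.txt text without making a network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
for path in ("/blog/technical-seo", "/admin/login", "/search?q=shoes"):
    url = "https://www.example.com" + path
    verdict = "allowed" if parser.can_fetch("*", url) else "blocked"
    print(path, "->", verdict)
```

Python's parser applies the first matching rule in file order, which may differ from how individual search engines resolve conflicting rules, so treat this as a sanity check, not a final verdict.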

Utilizing XML Sitemaps for Indexation

An XML sitemap is like a roadmap of your website that guides search engines to all crucial pages on your site.

It provides a structured layout of your website, detailing the URLs and offering additional information about each page, such as when it was last updated, how frequently it changes, and its priority relative to other URLs on your site.

Creating and submitting an XML sitemap is critical for new websites and websites with many pages.

It assists search engines in understanding the structure and hierarchy of your website, ensuring that they can discover and index your pages more efficiently.

You can generate an XML sitemap using various online tools or plugins and then submit it to search engines via their respective webmaster tools.
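If you would rather see what such a tool produces, the following sketch builds a small sitemap with Python's standard library. The URLs and dates are made-up examples, and only the `loc` and `lastmod` fields are included.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # e.g. 2024-01-15
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages for illustration.
print(build_sitemap([
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/blog/technical-seo", "2024-02-01"),
]))
```

Save the output as `sitemap.xml` at the root of your site before submitting it.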

Fixing Crawl Errors and Broken Links

Crawl errors occur when a search engine attempts to reach a page on your website but fails.

These errors can hinder search engines from crawling and indexing your website. This leads to reduced visibility on SERPs.

Similarly, broken links on your website create a poor user experience and can negatively impact your site’s SEO.

Regular website audits help you identify and fix crawl errors and broken links. Several SEO tools can assist with this process, providing comprehensive reports about crawl errors and broken links.

Once identified, you should promptly fix these issues by correcting the URLs, setting up proper redirects, or updating the links.
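Dedicated SEO tools do this at scale, but the idea behind a broken-link check is simple enough to sketch. The example below collects the links on one page and reports those that return an error status. The function names are illustrative, and the `status_of` argument is an assumption added so the check can be tried without network access.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def http_status(url, timeout=10):
    """Return the HTTP status for a URL, or None if the request fails outright."""
    try:
        # Some servers reject HEAD requests; fall back to GET if needed.
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as resp:
            return resp.status
    except HTTPError as err:
        return err.code
    except OSError:  # DNS failures, refused connections, timeouts
        return None


def find_broken_links(page_url, html, status_of=http_status):
    """Return (link, status) pairs for links that fail or return 4xx/5xx."""
    collector = LinkCollector()
    collector.feed(html)
    broken = []
    for href in collector.links:
        absolute = urljoin(page_url, href)
        if not absolute.startswith(("http://", "https://")):
            continue  # skip mailto:, tel:, and similar links
        status = status_of(absolute)
        if status is None or status >= 400:
            broken.append((absolute, status))
    return broken
```

Calling `find_broken_links("https://www.example.com/", page_html)` would issue one request per link, so run it sparingly against sites you own.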

Canonicalization and URL Parameters

Canonicalization is a method used to handle duplicate content issues arising from multiple URLs pointing to the same or similar content.

By specifying a canonical URL, you signal to search engines which version of a page should be considered the original and should be indexed.

URL parameters, on the other hand, create multiple URLs for the same page content, leading to duplicate content issues.

Using canonical tags helps ensure search engines understand which version of a URL to crawl and index. (Google retired the URL Parameters tool in Search Console in 2022, so canonical tags and consistent internal linking are now the main ways to handle parameterized URLs.) This practice improves your website’s crawling and indexing efficiency, leading to better search engine visibility.
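To illustrate how several parameterized URLs collapse into one canonical version, here is a minimal Python sketch. The list of ignored parameters and the example.com URLs are assumptions for illustration; which parameters are safe to drop depends on your site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change the page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def canonical_url(url):
    """Drop tracking parameters, sort the rest, and remove the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def canonical_link_tag(url):
    """Build the <link rel="canonical"> tag to place in the page's <head>."""
    return '<link rel="canonical" href="%s">' % escape(canonical_url(url), quote=True)


print(canonical_link_tag(
    "https://www.Example.com/shoes?utm_source=news&color=red&sessionid=42#top"
))
```

Every variant of the shoes URL above would then point search engines to the same canonical address.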

Site Speed and Performance

Impact of Site Speed on User Experience and SEO

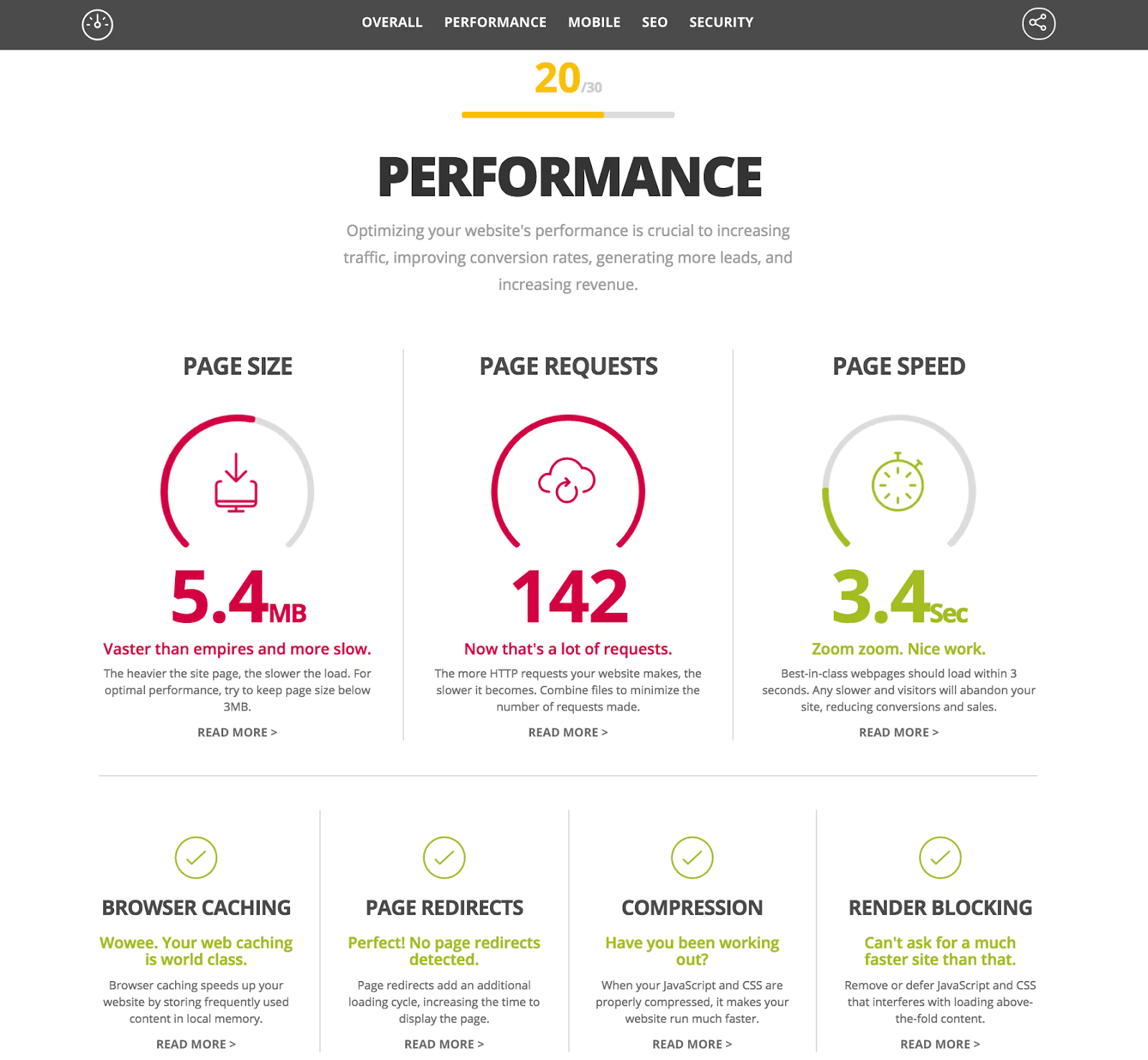

One of the most crucial aspects that directly influence a user’s experience on a website is its speed.

The time a webpage takes to load is pivotal in shaping a user’s first impression.

Users expect websites to load quickly and seamlessly. A delay of even a few seconds can lead to a loss of interest and a high bounce rate, adversely affecting user engagement and retention.

According to Pingdom, there is a 38% bounce rate when a page takes 5 seconds to load.

Notably, site speed also holds immense importance in the realm of SEO.

Google has acknowledged site speed as a ranking factor, further underlining its significance.

Websites that load quickly tend to rank higher in search engine results as search engines aim to promote sites that offer a better user experience.

Therefore, improving site speed can significantly contribute to higher search engine rankings, increased organic traffic, and enhanced user satisfaction.

Tools for Measuring and Improving Site Speed

Regularly monitoring your site’s speed and performance is essential to maintaining an optimal user experience and robust SEO.

Fortunately, several tools can help measure site speed and identify potential areas for improvement.

Google’s PageSpeed Insights is one such tool that provides a comprehensive analysis of your site’s performance on both desktop and mobile platforms. It measures site speed and offers recommendations for enhancements.

Another popular tool is GTmetrix, which provides a detailed report on your website’s speed performance. Earlier versions combined metrics from Google PageSpeed Insights and YSlow, while current reports are based on Google Lighthouse.

Using these tools regularly can help ensure your website maintains optimal speed, which improves user experience and supports higher search engine rankings.
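If you want to track scores over time instead of checking them by hand, PageSpeed Insights also offers an HTTP API. The sketch below builds a request URL and reads the performance score from a response. It assumes the v5 `runPagespeed` endpoint and the `lighthouseResult` response layout, so check the current API documentation before relying on it. The helper names are illustrative.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint of the PageSpeed Insights API (version 5).
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build the API request URL; strategy is 'mobile' or 'desktop'."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(report):
    """Convert the 0-1 Lighthouse performance score to the familiar 0-100 scale."""
    score = report["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def fetch_report(page_url, strategy="mobile", timeout=60):
    """Call the API (requires network access) and return the parsed JSON report."""
    with urlopen(psi_request_url(page_url, strategy), timeout=timeout) as resp:
        return json.load(resp)
```

A scheduled job could call `performance_score(fetch_report("https://www.example.com/"))` and log the result to spot regressions early.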

Optimizing Code, CSS, and JavaScript

Another significant aspect of improving site speed is optimizing your website’s code.

This includes your HTML, CSS, and JavaScript files. Large, bloated files can slow down your website as they require more time to load.

By minifying these files, you remove unnecessary characters (like white space and comments), reduce their size, and thus improve site speed.

Compression techniques, such as Gzip, can also further reduce file sizes. Additionally, removing unnecessary or unused code can streamline your files and boost your website’s loading speed.
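The effect of minification and Gzip is easy to demonstrate. The following Python sketch uses a deliberately naive CSS minifier (an assumption for illustration; production sites should use a dedicated minifier, since this one can break unusual CSS) and then compresses the result.

```python
import gzip
import re


def minify_css(css):
    """Naive CSS minifier: strips comments and collapses whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove comments
    css = re.sub(r"\s+", " ", css)                          # collapse whitespace
    css = re.sub(r"\s*([{}:;,])\s*", r"\1", css)            # trim around punctuation
    return css.strip()


# Hypothetical stylesheet, repeated so compression has something to work with.
CSS = """
/* Primary button */
.button {
    color: #ffffff;
    background-color: #0055aa;
}
""" * 20

minified = minify_css(CSS)
compressed = gzip.compress(minified.encode("utf-8"))

print("original bytes:  ", len(CSS))
print("minified bytes:  ", len(minified))
print("compressed bytes:", len(compressed))
```

In practice, your web server or CDN usually applies Gzip (or Brotli) automatically once it is enabled, so you rarely compress files by hand.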

Caching and Content Delivery Networks (CDNs)

Caching is a technique that stores copies of static website content (like images, JavaScript, and CSS files) to reduce server load and response time.

When a user visits a website, the cached version of the webpage is served unless it has changed since the last cache. This significantly improves site speed and provides a better user experience.

On the other hand, a Content Delivery Network (CDN) is a geographically distributed network of servers that work together to deliver internet content quickly.

CDNs store cached versions of your website on multiple servers worldwide, ensuring that your content is close to your users no matter where they are. This minimizes latency and helps your website load faster.
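Browser caching rests on a few HTTP headers. As a rough sketch of the mechanism, the function below attaches a `Cache-Control` header and an `ETag` to a static file, and answers with `304 Not Modified` when the browser already holds the current version. The function names and the one-day `max_age` are assumptions for illustration; real servers and CDNs handle this for you.

```python
import hashlib


def make_etag(body):
    """Derive a short fingerprint of the file contents."""
    return '"%s"' % hashlib.sha256(body).hexdigest()[:16]


def respond(body, if_none_match=None, max_age=86400):
    """Return (status, headers, body) for a static asset."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "public, max-age=%d" % max_age}
    if if_none_match == etag:
        return 304, headers, b""  # the cached copy is current, so send no body
    return 200, headers, body


# First visit: the full file is sent along with its ETag.
status, headers, _ = respond(b"body { margin: 0 }")
print(status, headers)

# Repeat visit: the browser sends the ETag back and gets a 304.
status, _, payload = respond(b"body { margin: 0 }", if_none_match=headers["ETag"])
print(status, len(payload))
```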

Minimizing HTTP Requests and File Sizes

Every time a browser fetches a file (an image, a CSS file, or a JavaScript file) from a server, it makes an HTTP (Hypertext Transfer Protocol) request.

Each HTTP request adds network round trips and overhead, so a page that needs many requests generally takes longer to load.

Strategies for minimizing HTTP requests include combining CSS and JavaScript files, using image sprites, and implementing lazy loading for images.

In addition to reducing HTTP requests, minimize file sizes. Techniques for reducing file sizes include compressing images, utilizing efficient file formats (like WebP for images), and optimizing multimedia content.

Smaller file sizes mean faster download times, leading to faster site speed and improved user experience.
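A quick way to see how many requests a page triggers is to count its external scripts, stylesheets, and images. This Python sketch does that for a snippet of HTML and also lists images that are not lazy-loaded. The class name and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class ResourceCounter(HTMLParser):
    """Count tags that trigger an additional HTTP request."""

    def __init__(self):
        super().__init__()
        self.counts = {"script": 0, "stylesheet": 0, "image": 0}
        self.images_without_lazy = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "script" and attrs.get("src"):
            self.counts["script"] += 1
        elif tag == "link" and "stylesheet" in rel:
            self.counts["stylesheet"] += 1
        elif tag == "img" and attrs.get("src"):
            self.counts["image"] += 1
            if (attrs.get("loading") or "").lower() != "lazy":
                self.images_without_lazy.append(attrs["src"])


def audit_resources(html):
    counter = ResourceCounter()
    counter.feed(html)
    return counter


# Hypothetical page fragment.
PAGE = (
    '<link rel="stylesheet" href="a.css">'
    '<script src="a.js"></script><script>inline()</script>'
    '<img src="hero.jpg"><img src="footer.png" loading="lazy">'
)
report = audit_resources(PAGE)
print(report.counts)
print("not lazy-loaded:", report.images_without_lazy)
```

Images that appear at the top of the page, like a hero image, are usually best left out of lazy loading so they display immediately.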

Mobile-Friendliness and Responsive Design

Mobile-First Indexing and Its Significance

As the digital landscape evolves, mobile devices have become integral to internet browsing.

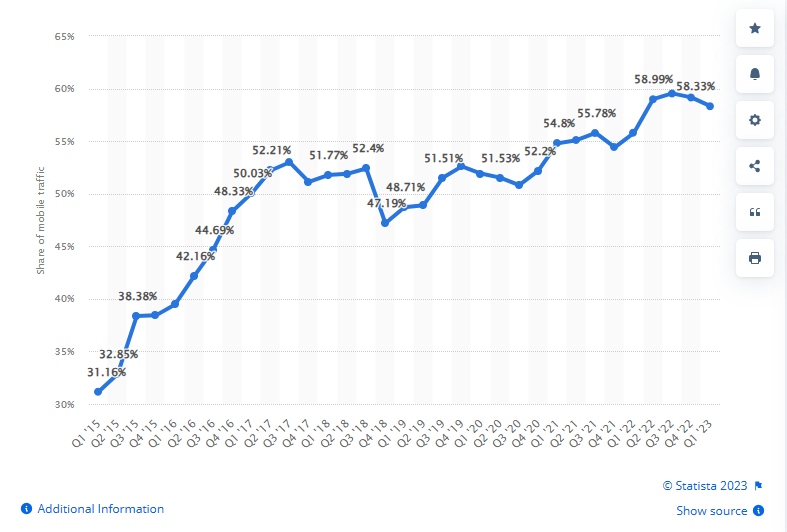

According to Statista, in the first quarter of 2021, mobile devices generated 54.8% of global website traffic.

Recognizing this shift, search engines like Google have adopted a mobile-first indexing approach.

Under this approach, Google predominantly uses the mobile version of a website’s content for indexing and ranking. If your site isn’t optimized for mobile, this could negatively impact your rankings.

Implementing Responsive Design Principles

Responsive design is a web design and development technique that creates a site or system that reacts to a user’s screen size. It aims to provide an optimal viewing experience across various devices, from desktop computers to tablets to mobile phones.

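The main principles of responsive design include fluid grids, flexible images, and media queries. Fluid grids allow layout elements to resize in relation to one another, providing a more streamlined scaling process. Flexible images scale within their containing elements so they never overflow the layout, and media queries apply different CSS rules depending on characteristics of the device, such as screen width.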

Optimizing for Different Screen Sizes and Devices

With the variety of devices available today, your website must function optimally on desktops, smartphones, and tablets.

This involves designing touch-friendly interfaces, considering the user context, and using responsive breakpoints.

A responsive breakpoint is the point, usually a screen width, at which a website’s content and design adapt to provide the best possible user experience.

For instance, you might have one design for screens less than 480 pixels wide (for mobile phones) and another design for screens more than 800 pixels wide (for desktops).
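The breakpoints from this example can be written down as data. The Python sketch below turns them into CSS media query strings and shows which layout a given screen width would receive. The in-between "tablet" tier and the function names are assumptions added for illustration.

```python
# (layout name, minimum width in px, maximum width in px)
# The 480 and 800 pixel thresholds follow the example above;
# the "tablet" tier in between is an assumption.
BREAKPOINTS = [
    ("mobile", None, 479),
    ("tablet", 480, 800),
    ("desktop", 801, None),
]


def media_query(min_width, max_width):
    """Build the CSS media query that matches a width range."""
    conditions = []
    if min_width is not None:
        conditions.append("(min-width: %dpx)" % min_width)
    if max_width is not None:
        conditions.append("(max-width: %dpx)" % max_width)
    return "@media " + " and ".join(conditions)


def layout_for(width):
    """Return the name of the layout that applies at a given screen width."""
    for name, low, high in BREAKPOINTS:
        if (low is None or width >= low) and (high is None or width <= high):
            return name
    return None


for name, low, high in BREAKPOINTS:
    print(name, "->", media_query(low, high))
print(layout_for(375), layout_for(768), layout_for(1280))
```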

Testing and Improving Mobile Usability

Just as important as implementing mobile-friendly design is testing your website’s mobile usability. This process helps identify potential issues that might hamper a user’s experience on mobile devices.

Tools such as Lighthouse, which is built into Chrome DevTools, can provide insights into how well your site works on mobile devices. Google retired its standalone Mobile-Friendly Test in December 2023.
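As a quick first check before running a full testing tool, you can confirm that a page declares a responsive viewport. The Python sketch below looks for the viewport meta tag. It covers only this one signal, and the function name is illustrative.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Record the content of the viewport meta tag, if the page has one."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "viewport":
            self.viewport = attrs.get("content") or ""


def has_responsive_viewport(html):
    """True if the page sets the viewport width to the device width."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport is None:
        return False
    return "width=device-width" in finder.viewport.replace(" ", "").lower()


print(has_responsive_viewport(
    '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
))
print(has_responsive_viewport("<head><title>No viewport tag</title></head>"))
```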

Website Architecture and URL Structure

Importance of a Well-Organized Website Structure

For users, a logically structured, easy-to-navigate website enhances their experience and makes finding the information they need simple.

This can lead to longer site visits, lower bounce rates, and higher conversions.

From an SEO perspective, a clear and intuitive site structure allows search engines to crawl your site more efficiently.

A sound hierarchical structure lets search engines understand the relationships between various pages and the overall content hierarchy. This understanding can improve your website’s visibility and rankings in search engine results.

Optimizing Navigation and Internal Linking

Optimizing navigation involves using intuitive menus, clear labels, and a logical hierarchical structure.

For instance, your main menu should include your most important pages, and each page should be categorized under relevant headers.

Internal linking is another essential aspect of website navigation. It involves linking one page of your website to another, establishing relationships between pages, and distributing link equity throughout your site.

Effective internal linking can guide users to high-value, related content, encouraging them to spend more time on your site. It also helps search engines discover your content and understand the context and relationship between different pages.
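One practical way to review internal linking is to measure how many clicks each page is from the homepage and to look for pages that nothing links to. The following sketch runs a breadth-first search over a small, hypothetical link map. On a real site, you would build this map from a crawl.

```python
from collections import deque


def click_depths(links, start="/"):
    """Breadth-first search: fewest clicks needed to reach each page from start."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links, start="/"):
    """Pages that exist in the link map but cannot be reached from start."""
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - set(click_depths(links, start)))


# Hypothetical site: each page maps to the pages it links to.
SITE = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/technical-seo"],
    "/products": ["/products/shoes"],
    "/products/shoes": ["/"],
    "/old-landing-page": ["/"],
}

print(click_depths(SITE))
print("orphans:", orphan_pages(SITE))
```

Pages with a high click depth, or pages that show up as orphans, are good candidates for new internal links.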

URL Optimization and URL Parameters

URL optimization plays a significant role in enhancing user experience and search engine visibility.

URLs are at most a minor ranking signal, but short, descriptive URLs are easier for users to read and can attract more clicks in search results.

An SEO-friendly URL is easy to read and understand and gives an idea of the page content. This helps users determine whether a particular page is relevant to their search.

Creating SEO-friendly URLs involves using relevant keywords that reflect the content, avoiding excessive parameters, and using hyphens instead of underscores for word separation.

Moreover, it’s best to keep your URLs as concise as possible. Shorter URLs are easier for users to copy, paste, share, and remember.
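These guidelines can be captured in a small helper that turns a page title into a URL slug. This Python sketch lowercases the title, separates words with hyphens, and caps the length. The six-word limit is an arbitrary assumption, not an SEO rule.

```python
import re
import unicodedata


def slugify(title, max_words=6):
    """Turn a page title into a short, hyphen-separated URL slug."""
    title = re.sub(r"['’]", "", title)  # drop apostrophes so "it's" becomes "its"
    ascii_text = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    )
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words[:max_words])


print(slugify("Technical SEO: Site Speed & Performance"))
print(slugify("Café Menu_Ideas"))
```

Underscores, punctuation, and accents are all normalized away, leaving only lowercase words joined by hyphens.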

Breadcrumb Navigation and User Experience

Breadcrumb navigation is a secondary navigation system that shows a user’s location on a website or web application.

The term comes from the Hansel and Gretel fairy tale, where the main characters leave a trail of breadcrumbs so they can retrace their path.

Breadcrumb navigation can significantly enhance user experience as it provides a quick way for users to understand the layout of your website. It helps users navigate back to higher-level pages and gives them a clear sense of their current location.
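When your URL structure mirrors your site hierarchy, a breadcrumb trail can be derived directly from the URL. The sketch below shows the idea. Deriving labels from URL segments is a simplifying assumption, since most sites use the actual page titles.

```python
from urllib.parse import urlsplit


def breadcrumb_trail(url, home_label="Home"):
    """Return (label, link) pairs from the homepage down to the current page."""
    parts = urlsplit(url)
    base = "%s://%s" % (parts.scheme, parts.netloc)
    trail = [(home_label, base + "/")]
    path = ""
    for segment in filter(None, parts.path.split("/")):
        path += "/" + segment
        label = segment.replace("-", " ").title()
        trail.append((label, base + path))
    return trail


trail = breadcrumb_trail("https://www.example.com/blog/technical-seo")
print(" > ".join(label for label, _ in trail))
```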

Structured Data and Schema Markup

Definition and Benefits of Structured Data

Structured data refers to any data organized in a specific, predefined manner.

In terms of SEO, structured data is a standardized format for providing additional information about a webpage. This information helps search engines better understand the content of the page, and it can be used to enhance the site’s listing in search results, leading to improved visibility and click-through rates.

The benefits of structured data are manifold. It can enhance search engine visibility as search engines can better understand and index your website content.

Structured data also allows search engines to generate rich search results, such as rich snippets or Knowledge Graph entries, which can significantly improve click-through rates. It also allows for more accurate targeting of user queries, potentially leading to increased organic traffic.

Utilizing Schema Markup for Search Engines

Schema markup is a specific type of structured data vocabulary understood by major search engines like Google, Bing, and Yahoo.

It helps search engines interpret the information on your web pages more effectively to serve relevant results to users based on their queries.

When you add schema markup to your webpage, you’re enhancing how your page is displayed in SERPs by providing precise information to the search engines. This can result in improved rankings and increased visibility for your website.

Some industry studies have reported that websites using schema markup rank about four positions higher in search results and see a 36% higher click-through rate than those without it, although such figures show a correlation, not a guaranteed effect.

Types of Structured Data and Their Applications

Several types of structured data can be utilized depending on the content of your webpage. These include:

- Product: This is used for product information, including price, availability, and review ratings. It can result in rich product results and increased click-through rates.

- Article: This is used for news articles, blog posts, and sports articles. It can result in rich results and carousels, making the page more attractive to users.

- Recipe: This is used for recipes. It can provide calories, preparation time, and review ratings.

- Event: This is for event information, such as location, date, and availability. It can result in rich results detailing the event, increasing visibility and user engagement.

- Local Business: This is used for local businesses. It can provide details such as address, opening hours, and contact information, making it easier for users to find the necessary information.
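To show what one of these types looks like in practice, here is a Python sketch that generates Product markup in JSON-LD, a common format for schema markup. The product details are invented, and the properties shown are a small subset of what Schema.org defines.

```python
import json


def product_jsonld(name, price, currency, in_stock, rating, review_count):
    """Build a JSON-LD script tag describing a product."""
    availability = "InStock" if in_stock else "OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": "%.2f" % price,
            "priceCurrency": currency,
            "availability": "https://schema.org/" + availability,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so text inside the data cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">%s</script>' % payload


# Hypothetical product for illustration.
print(product_jsonld("Trail Running Shoe", 89.5, "USD", True, 4.6, 128))
```

The resulting script tag goes into the HTML of the product page, and the values must match what visitors actually see on that page.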

Tools for Implementing Structured Data

Several tools are available to assist in implementing schema markup, including Google’s Rich Results Test and the Schema Markup Validator at validator.schema.org, which replaced Google’s older Structured Data Testing Tool.

These tools can validate your markup and provide suggestions for improvement.

Adding schema markup to web pages can be done manually or with plugins or extensions available for popular content management systems.

The process involves adding the relevant schema markup to your HTML, ensuring the data added is accurate and reflects the content of your page. Once implemented, you should regularly test and validate your structured data to ensure it’s working correctly and optimized for the best results.
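Alongside those tools, a basic automated check can catch the most common mistakes before a page goes live. This sketch extracts JSON-LD blocks from a page and reports invalid JSON or a missing `@context` or `@type`. It is a simplified pre-check, not a replacement for a full validator, and the names are illustrative.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every JSON-LD script block in a page."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def check_jsonld(html):
    """Return a list of problems found in the page's JSON-LD blocks."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    problems = []
    for number, raw in enumerate(extractor.blocks, start=1):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as err:
            problems.append("block %d: invalid JSON (%s)" % (number, err.msg))
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            for key in ("@context", "@type"):
                if not isinstance(item, dict) or key not in item:
                    problems.append("block %d: missing %s" % (number, key))
    return problems
```

An empty list means the markup passed these basic checks. You should still run it through a full validator.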

XML Sitemaps and Robots.txt

Creating and Submitting XML Sitemaps

Creating an XML sitemap involves including all of the important pages on your website, prioritizing URLs based on their relevance, and keeping the sitemap updated to reflect any changes to your site.

There are numerous online tools and plugins available that can assist in generating an XML sitemap.

Once you’ve created an XML sitemap, you’ll need to submit it to search engines.

For instance, if you want to submit your sitemap to Google, you would do so through Google Search Console. By submitting your XML sitemap, you’re ensuring that Google has the most accurate and up-to-date information about your site, which can lead to more effective indexing.

Importance of Robots.txt File

The robots.txt file controls how search engine crawlers interact with your website.

It instructs these crawlers on which parts of your website to crawl and which parts to ignore.

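This is useful for keeping crawlers away from pages that offer no search value, such as internal search results or duplicate content. Because robots.txt is public and does not stop a blocked URL from being indexed if other sites link to it, protect sensitive pages such as user account areas with authentication or a noindex directive instead.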

Configuring Robots.txt for Optimal Crawling

Optimizing your robots.txt file involves carefully configuring it to ensure search engine crawlers can access important pages while ignoring irrelevant or sensitive ones.

Key best practices include:

- Allowing access to key pages and resources that support your main content

- Blocking access to duplicate or irrelevant pages

- Being aware of common pitfalls, like inadvertently blocking your entire site

Your robots.txt file should be structured correctly, with user-agent directives followed by disallow or allow directives.

It’s important to know that each search engine crawler might interpret the directives differently, so it’s crucial to test your robots.txt file using tools like the robots.txt report in Google Search Console, which replaced the older robots.txt Tester.

Common Mistakes and Best Practices

Some common mistakes when managing XML sitemaps and robots.txt files include blocking important pages that should be crawled and indexed, neglecting to update your sitemap as your site changes, or inadvertently blocking search engines from accessing your site entirely.

Best practices involve regularly updating and checking your XML sitemap and robots.txt file to ensure they function as intended.

It’s also crucial to use specific directives in your robots.txt file that align with your SEO strategy.

Additionally, testing your robots.txt file for errors or issues can prevent problems before they impact your site’s visibility in search results.
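One check worth automating is whether your robots.txt file blocks any URL that your sitemap asks search engines to index, since the two files then send conflicting signals. The sketch below performs that comparison on text you supply. The sample files are hypothetical, and Python's parser may not match every search engine's rule handling exactly.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def blocked_sitemap_urls(robots_txt, sitemap_xml, user_agent="*"):
    """Return the sitemap URLs that robots.txt forbids the crawler to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    root = ET.fromstring(sitemap_xml)
    urls = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical files for illustration.
ROBOTS = "User-agent: *\nDisallow: /blog/\n"
SITEMAP = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://www.example.com/</loc></url>"
    "<url><loc>https://www.example.com/blog/technical-seo</loc></url>"
    "</urlset>"
)

print(blocked_sitemap_urls(ROBOTS, SITEMAP))
```

If the function returns every URL in the sitemap, the robots.txt file is probably blocking the entire site by mistake.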

HTTPS and Website Security

Importance of Secure Websites for SEO

Website security has become a significant search engine optimization (SEO) factor.

Search engines, especially Google, prioritize secure websites in their rankings. This preference is a part of their commitment to provide users with the safest web browsing experience possible.

Websites secured with HTTPS (Hypertext Transfer Protocol Secure) rank higher in search results, all other things being equal. The advantages of having a secure website extend beyond just improved rankings.

A secure website can help build user trust by assuring visitors that their information stays confidential and protected. Moreover, HTTPS protects against cyber threats, safeguarding your website’s data integrity and the privacy of its users.

Implementing HTTPS and SSL Certificates

HTTPS, or Hypertext Transfer Protocol Secure, is the secure version of HTTP, the protocol for sending data between a user’s browser and the website they are connected to.

The ‘S’ in HTTPS stands for ‘Secure’ and indicates that all communication between the browser and the website is encrypted, thus protecting the integrity and confidentiality of data exchanged.

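Implementing HTTPS involves acquiring and installing an SSL (Secure Sockets Layer) certificate. Modern connections actually use TLS (Transport Layer Security), the successor to SSL, but the certificates are still commonly called SSL certificates.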

The SSL certificate creates a secure, encrypted connection between the server hosting the website and the user’s browser.

The steps to implementing HTTPS vary depending on your hosting provider, but the general process includes the following:

- Purchase or obtain a free SSL certificate from a trusted Certificate Authority.

- Install and configure the SSL certificate on your website’s hosting account.

- Update your website’s URL structure to use HTTPS and set up a 301 redirect from HTTP to HTTPS.

- Verify that your website is accessible via HTTPS and that all resources (CSS, images, JavaScript, etc.) are loaded over HTTPS.
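The redirect step is usually configured in your web server or hosting control panel, but the logic is easy to express. This Python sketch shows what the server should answer for an HTTP request: a 301 status pointing to the same address on HTTPS. The function name is illustrative, and the sketch assumes default ports.

```python
from urllib.parse import urlsplit, urlunsplit


def https_redirect(url):
    """Return (status, headers) redirecting to HTTPS, or None if already secure."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        return None
    # Keep the host, path, and query string; assumes the default HTTPS port.
    target = urlunsplit(("https", parts.hostname, parts.path or "/", parts.query, ""))
    return 301, {"Location": target}


print(https_redirect("http://www.example.com/pricing?plan=pro"))
print(https_redirect("https://www.example.com/"))
```

Using a permanent (301) redirect, as opposed to a temporary one, tells search engines to transfer the old HTTP URLs to their HTTPS versions.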

Handling Mixed Content Issues

Mixed content occurs when initial HTML is loaded over a secure HTTPS connection, but other resources (such as images, videos, stylesheets, and scripts) are loaded over an insecure HTTP connection.

This is problematic because the insecure content can be tampered with, compromising the security of the entire page.

To handle mixed content issues, you should identify all the HTTP content on your website and replace it with HTTPS content. Various tools can help identify mixed content, including browser console warnings, security scanners, and SSL checkers.
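If you prefer to scan your own templates, a small script can flag the most common offenders. The following sketch lists images, scripts, stylesheets, and embedded media that are still referenced over plain HTTP. The tag list is an assumption and is not exhaustive (for example, it ignores URLs inside CSS files).

```python
from html.parser import HTMLParser

# Tags whose src attribute loads a resource into the page (assumed subset).
SRC_TAGS = {"img", "script", "iframe", "video", "audio", "source"}


class MixedContentFinder(HTMLParser):
    """Collect resources that are referenced over insecure HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        url = None
        if tag in SRC_TAGS:
            url = attrs.get("src")
        elif tag == "link" and "stylesheet" in (attrs.get("rel") or "").lower().split():
            url = attrs.get("href")
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html):
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page fragment.
PAGE_HTML = (
    '<link rel="stylesheet" href="https://www.example.com/site.css">'
    '<img src="http://www.example.com/logo.png">'
    '<script src="http://cdn.example.com/app.js"></script>'
    '<a href="http://www.example.com/about">About</a>'
)
print(find_mixed_content(PAGE_HTML))
```

Ordinary links to HTTP pages, like the last one in the sample, are not mixed content, because the browser does not load them as part of the page.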

Protecting Against Security Threats and Hacking

Website security threats like malware, hacking, and data breaches can severely damage a website’s reputation, user experience, and SEO efforts.

Consequently, it’s crucial to take steps to safeguard against these risks.

Here are some essential tips for enhancing website security:

- Keep all software, platforms, and plugins up-to-date with the latest versions and security patches.

- Implement strong, unique passwords and change them regularly.

- Regularly back up your website data to recover it in case of a breach or attack.

- Use security plugins and tools to monitor your website for any suspicious activity.

- Regularly scan your website for malware or other security threats.

Conclusion

The many facets of technical SEO are crucial in optimizing your website for users and search engines.

Technical SEO allows search engines to understand your website better, subsequently improving its rankings in search engine results pages (SERPs). Simultaneously, it enhances the user experience, encouraging more conversions and loyalty among your visitors.

As we’ve explored these components, it’s clear that implementing technical SEO is not a one-time affair. It requires ongoing attention and adjustments to accommodate evolving search engine algorithms and user behaviors.

Selecting the right hosting provider and platform is also crucial for taking your website to the next level. SEO-friendly web hosting can significantly influence your site’s performance, speed, and security, all of which are vital components of technical SEO.

Next Steps: What Now?

- Start by checking your site’s crawlability and indexing status using tools like Google Search Console.

- Evaluate your website’s speed and performance using tools like Google PageSpeed Insights, then identify and address any problem areas.

- Ensure your site is mobile-friendly and responsive for all devices, testing it with a tool such as Lighthouse in Chrome DevTools.

- Review your website architecture and URL structure for optimal navigation and SEO-friendly URLs.

- Begin implementing structured data and schema markup on your site to enhance search engine visibility.

- Check your XML sitemaps and robots.txt files to ensure they aid search engine crawlers.

- Implement HTTPS and invest in an SSL certificate for your site to ensure security and user trust.